I want to run Hyper-V and other software (SQL Server 2008) on my laptop. I am considering running Team Foundation Server, Windows XP, several SQL Server versions, old Visual Studio versions, Visio, BizTalk and others inside Hyper-V images on the laptop. At the same time I want the laptop to function normally (Internet Explorer, Media Player, Office, etc.).

Hyper-V runs on 64-bit Windows Server 2008. The machine needs to support hardware-assisted virtualization and data execution prevention, and these must be enabled in BIOS.

- Download driver packages for Vista x64 and save them on a flash drive

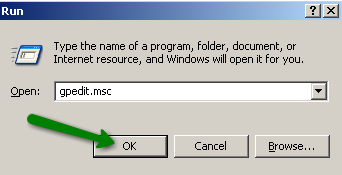

- Install Windows Server 2008

- Rename computer

- Run "control userpasswords2" and enable automatic logon

- Install drivers and enable wireless service (not automatic on server)

- Install Intel Graphics sp37719

- Install "Integrated module with Bluetooth wireless technology for Vista" SP34275 (ignore the message if it says "no bluetooth device")

- Replace wireless driver: \Intel Wireless sp38052a\v64

- Add feature "Wireless..."

- Connect to internet

- Start updates

  - Get updates for more programs

  - Automatic

  - Important updates only

- View available updates

  - Add optional: Hyper-V

- Install Hyper-V

  - Add role Hyper-V

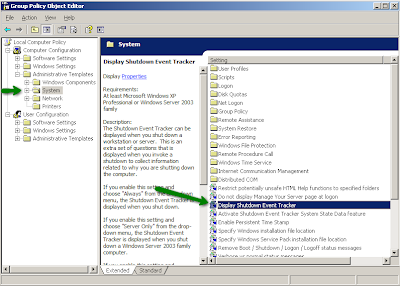

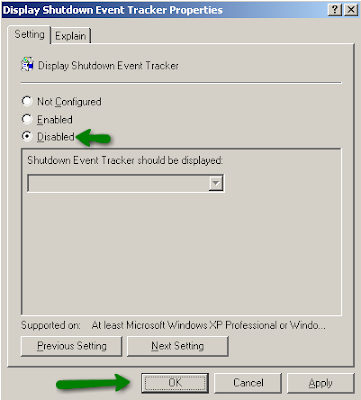
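The wireless feature and the Hyper-V role can also be added from an elevated command prompt instead of Server Manager. The sketch below only builds and prints the commands. It assumes the `ServerManagerCmd` tool that ships with Windows Server 2008, and the identifiers `Wireless-Networking` and `Hyper-V` are assumptions that should be confirmed with `ServerManagerCmd -query` before running anything.

```python
import subprocess
import sys

# Assumed role/feature identifiers; confirm them with `ServerManagerCmd -query`.
COMPONENTS = ["Wireless-Networking", "Hyper-V"]


def install_command(component_id):
    """Build the ServerManagerCmd call that installs one role or feature."""
    return ["ServerManagerCmd", "-install", component_id]


def install_all(components, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    commands = [install_command(c) for c in components]
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            # Needs an elevated prompt on the server itself.
            subprocess.run(cmd, check=True)
    return commands


if __name__ == "__main__":
    # Dry run by default; pass --run to actually execute the commands.
    install_all(COMPONENTS, dry_run="--run" not in sys.argv)
```

Adding the Hyper-V role requires a reboot, so running it for real is best done as a separate, deliberate step.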

The machine is now a hypervisor. The next steps make Windows Server 2008 act more like a client OS.

- Install other drivers

- SoundMAX SP36683

- Install Feature "Desktop Experience" (Media Player, etc)

- Create shortcut "net start vmms" for starting Hyper-V and "net stop vmms" for stopping Hyper-V

- Set startup for service "Hyper-V Virtual Machine Management" to manual

- stop vmms

- Install Virtual PC 2007 (in case I want wireless network from a vm)

- Enter product key and activate Windows
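The Hyper-V service steps in the list above (manual startup, plus start and stop shortcuts) can be sketched as a small helper. This is a minimal sketch, not the setup I actually used: it assumes the service's short name is `vmms`, as in the "net start vmms" shortcut, and uses the standard `sc config` and `net start`/`net stop` commands. The function names are made up for illustration.

```python
import subprocess

# Short name of the "Hyper-V Virtual Machine Management" service,
# taken from the "net start vmms" shortcut above.
SERVICE = "vmms"


def manual_start_command(service=SERVICE):
    """Set the service to manual startup (sc.exe wants 'start=' then the value)."""
    return ["sc", "config", service, "start=", "demand"]


def toggle_command(service=SERVICE, start=True):
    """Build the same command the desktop shortcuts run."""
    return ["net", "start" if start else "stop", service]


def run(cmd, dry_run=True):
    """Print the command; execute it only when dry_run is False."""
    print(" ".join(cmd))
    if not dry_run:
        # Needs an elevated prompt on Windows.
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run(manual_start_command())
    run(toggle_command(start=False))
```

Keeping the service on manual start means Hyper-V management only runs when a virtual machine is actually needed.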

Since I now have a working version of Windows, this is the time to activate it. The next step would ideally be to install Office, but I don't have Office handy (I am on vacation), so I install the viewers for Word, PowerPoint and Excel instead.

I am now ready to install Visual Studio and the servers. First up is SQL Server 2008 RC.

- Set up IIS (with all the IIS 6 stuff)

- Set up Windows SharePoint Services 3.0 x64 with Service Pack 1 to run "stand-alone"

- (optionally) set up Windows SharePoint Services 3.0 Application Templates – 20070221

- Create user ServiceAccount to run all the SQL Server services

- Install SQL Server (complete) with Reporting Services in SharePoint integrated mode

- Open firewall (http://go.microsoft.com/fwlink/?LinkId=94001)

  - Open TCP 1433 (engine), 2383 (SSAS), 80 (SharePoint), 135 (SSIS), 443 (HTTPS), 4022 (Service Broker)

  - Open UDP 1434 (SQL Server Browser)

  - Enable "notify me when new program blocked"
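The port list above can also be turned into firewall rules from the command line rather than clicked in one by one. The sketch below only generates the command lines. It assumes the `netsh advfirewall firewall add rule` syntax available on Windows Server 2008, and the rule names are made up for illustration.

```python
# (rule name, protocol, port) from the list above; the names are hypothetical.
RULES = [
    ("sql-engine", "TCP", 1433),
    ("ssas", "TCP", 2383),
    ("sharepoint-http", "TCP", 80),
    ("ssis", "TCP", 135),
    ("https", "TCP", 443),
    ("service-broker", "TCP", 4022),
    ("udp-1434", "UDP", 1434),
]


def firewall_command(name, protocol, port):
    """Return one netsh command line that allows inbound traffic on a port."""
    return (
        "netsh advfirewall firewall add rule "
        f"name={name} dir=in action=allow protocol={protocol} localport={port}"
    )


def all_commands(rules=RULES):
    """Build one command line per rule, in list order."""
    return [firewall_command(*rule) for rule in rules]


if __name__ == "__main__":
    # Print the commands so they can be reviewed, then pasted into an
    # elevated command prompt.
    for line in all_commands():
        print(line)
```

Printing instead of executing makes it easy to review each rule before opening a port.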

Now get all updates.

The next step is to install Visual Studio, which should be straightforward.

OK, I'm off to the beach,

Gorm